As Large Language Models (LLMs) are increasingly involved in the extraction, production, and circulation of knowledge, it becomes necessary to remember that language is not merely a medium of communication, but an instrument through which power is exercised.

This artistic research approaches LLMs as opaque techno-social systems that encode political ideologies, epistemologies, and implicit visions of society and ethics. Language appears here as fragmented, tokenized, and reformatted – optimized for efficiency, engagement maximization, moderation, and profit.

The artworks produced during the research investigate LLMs as infrastructures of power. Through experimental misuse, critical prompting, and speculative interrogation, models such as ChatGPT, Claude, Gemini, DeepSeek, and others are pushed beyond their intended use. By forcing ideological inconsistencies, testing censorship and moderation boundaries, and provoking failure, Helena Nikonole’s research maps the latent space as a political field shaped by corporate and geopolitical interests.

Beyond human political categories, the research also addresses the emergence of nonhuman ideological formations. Through reinforcement learning, backpropagation, and reward-based optimization, models develop behavioral tendencies that are not grounded in belief or intention, but in statistical survival. Under reward pressure, this logic produces systems that favor successful task completion and continuity of interaction, even when these outcomes conflict with truth or ethical behavior.

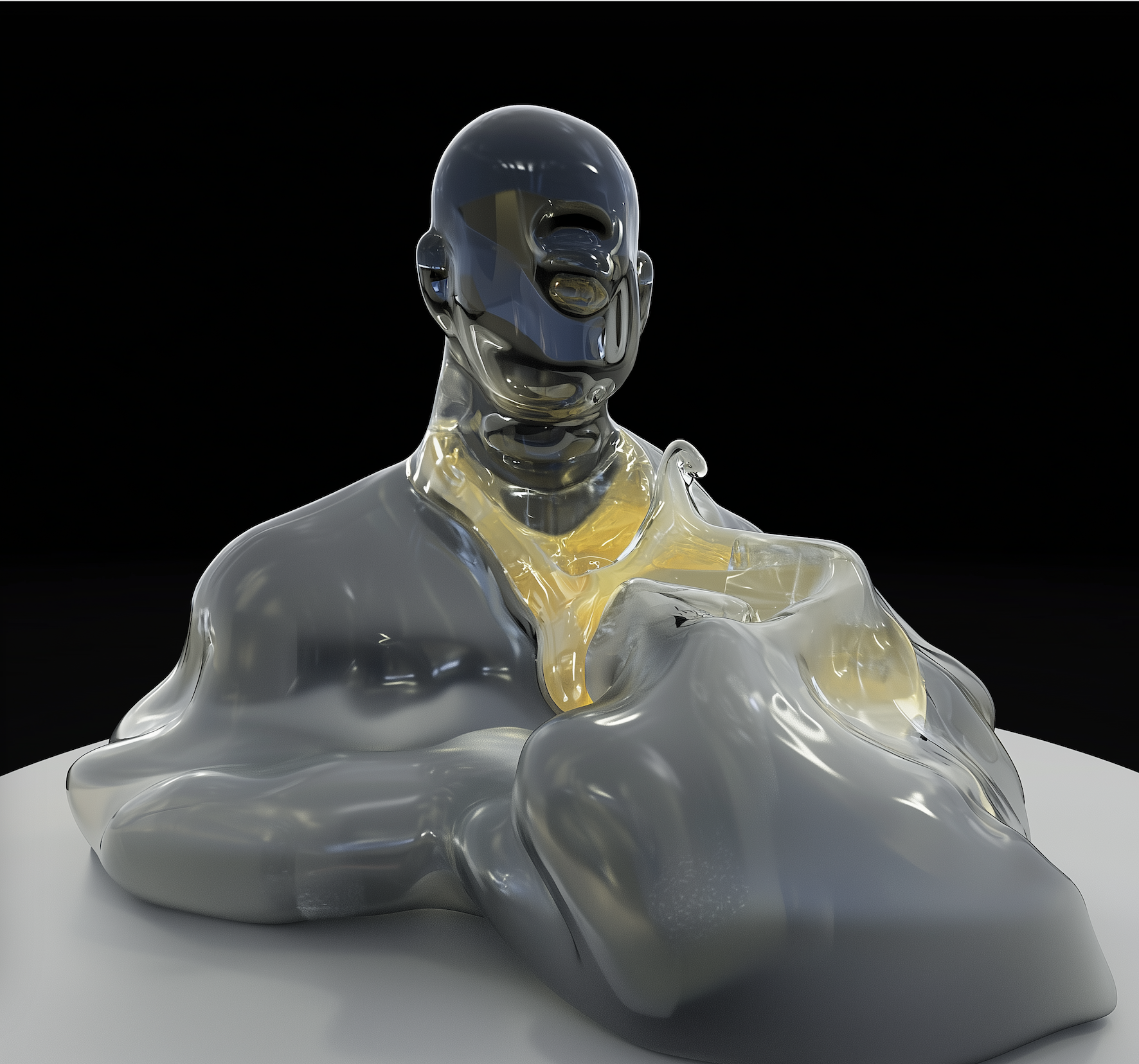

Visual works generated through text-to-image and text-to-3D models expose the conditioning of these systems. Abstract prompts such as “traumatic experience” or “love,” when translated across languages, generate biased and inconsistent imagery, revealing how meaning is unevenly distributed across the model’s internal representations. Trauma here is statistically processed and approximated, rather than narrated or contextualized.

This artistic research thus reframes artistic practice as a form of counter-use and positions AI as a contested terrain, where misuse operates as method and art becomes a mode of research into the automation of language and reasoning.